Community-built robots you can build, run, and own

Mobile Robots

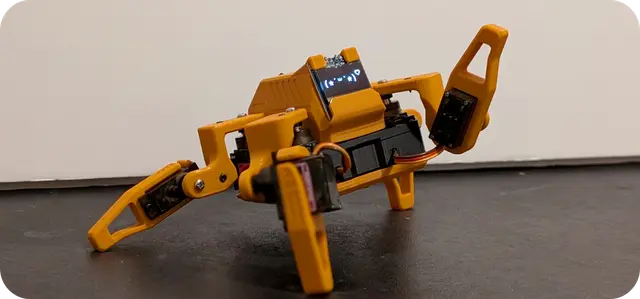

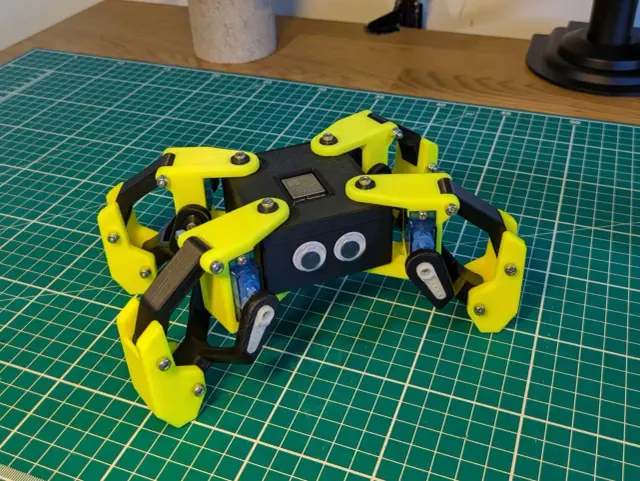

Mobile Robots: Sesame Quadruped

Sesame is an affordable, open-source mini quadruped robot powered by an ESP32 microcontroller. Designed by Dorian Todd (Dorian Borian), Sesame uses 8 MG90S metal-gear servos (two per leg) for 8-DOF locomotion and features a 128×64 OLED display synced to movement. All mechanical parts are fully 3D-printable on a standard FDM printer (PLA, minimal supports). Hat variants available: enclosed, open-top, cat-ears.

Printing

This file list prints one complete robot. A few notes:

- Legs print x2. L1-L4 and R1-R4 are the left/right leg segments; print the full L1-L4 and R1-R4 set twice (4 legs / 8 leg assemblies total).
- Frame and bottom cover (Internal-Frame, Bottom-Cover) print x1 each.
- Top cover: the included Top-Cover-Enclosed (stubby ears, as shown in the photos) is the default. Alternate covers, Cat (sharp cat ears) and No-Ears (a template for designing your own ears), are available upstream and can be swapped in if preferred.
- Optional: the two magnetic hats (chainsaw, modular-mount) are cosmetic add-ons that clip onto the cover magnet slots. Print them only if you want them.

Capabilities

- 8-DOF quadruped locomotion (walk, trot, wave, dance, point, rest, sit)
- 128×64 OLED face synced to movement
- WiFi web UI + JSON REST API: control from any browser
- Serial CLI for direct command input
- Pre-programmed emotes via Sesame Studio animation composer
- Sesame Simulator: Rust/URDF web 3D sim for previewing poses offline

Prerequisites

- Basic soldering (hand-soldered wiring harness; see Wiring Guide)
- Access to an FDM printer
- Arduino IDE familiarity for firmware flashing

Build Gotchas

> Use the Lolin S2 Mini (or Sesame Distro Board V3), not a bare ESP32-DevKit. The upstream firmware targets the S2 Mini pinout specifically.

> Power supply matters: use a genuine 5V/3A regulated supply. Underpowered supplies cause brownouts under load.

> Print in PLA with minimal supports. The STL set is tuned for FDM; no ABS/PETG required.

Metadata

- GitHub: dorianborian/sesame-robot (1,619 ★)
- License: Apache 2.0
- Author: Dorian Todd (Dorian Borian)
- Difficulty: Intermediate

Links

- GitHub Repository
- BOM
- Printing Guide
- Build Guide
- Wiring Guide
- Firmware · Firmware Docs
- Sesame Studio (animation composer)
- Sesame Simulator (Rust/URDF web sim)
- Companion App
- Launch video (YouTube)

from $85 · BoM

Humanoids: Reachy Mini

Expressive open-source robot for hackers and AI builders

from $299 · BoM

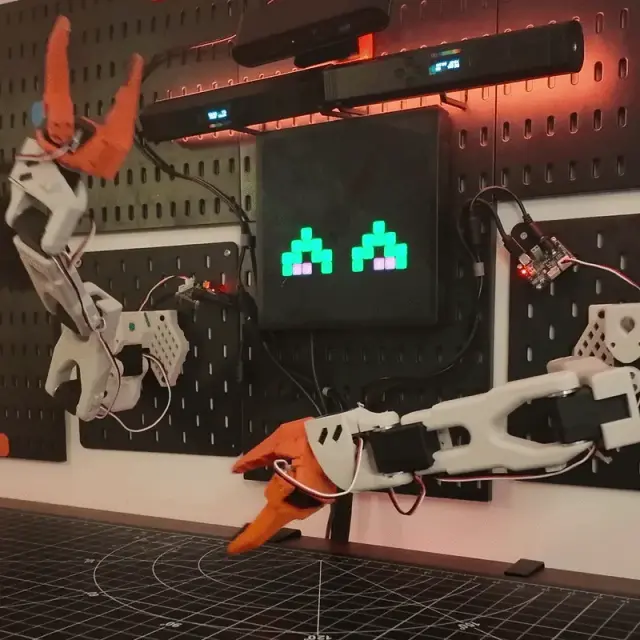

Arm Robots: SO-101 Teleop Arm

SO-101 is the current-generation open-source 6-DOF teleoperated robotic arm from The Robot Studio, designed in collaboration with Hugging Face's LeRobot project. It is the successor to the SO-100 and one of the most widely built low-cost research arms, used by hundreds of researchers and hobbyists as a platform for imitation learning, robot learning datasets, and end-to-end AI for manipulation.

The design is a leader/follower pair: the human operator back-drives the leader arm by hand, and the follower mirrors the motion to manipulate objects. Both arms share the same 6-DOF serial kinematic structure (base rotation, shoulder, elbow, wrist pitch, wrist roll, gripper) with one STS3215 smart servo per joint. The total bill of materials is under $120 per arm including the parallel-jaw gripper, making it far more accessible than conventional research arms.

SO-101 improves on SO-100 with cleaner wiring channels, easier assembly (no gear disassembly required for installation), and updated motors on the leader arm for improved back-driveability. The design is fully parametric, with print-orientation guides provided for both Ender and Prusa workflows. All CAD, STLs, firmware, and assembly instructions are Apache 2.0 licensed.

The arm plugs directly into the Hugging Face LeRobot library for data collection, policy training (ACT, diffusion policy, VQ-BeT), and rollout: you can record teleop demonstrations, train a neural policy, and run autonomous manipulation on the same hardware.

This program exposes cloud-side endpoints for homing, gripper control, recorded trajectory replay, and a mirror-leader hook for live teleop relay.

Credit to The Robot Studio (therobotstudio.com) and the Hugging Face LeRobot team. Upstream repository at https://github.com/TheRobotStudio/SO-ARM100 under Apache 2.0. Assembly guide at https://huggingface.co/docs/lerobot/so101.

Printing

The Print All set builds the complete leader + follower pair (print one of each file). The two arms share most parts but differ at the wrist/gripper, so both variants are included on purpose: Shared by both arms: , , , , , , , . Follower-only (the gripper): , . Leader-only (the hand-drive handle): , , . Every arm uses one STS3215 smart servo per joint (6 per arm, 12 total). No optional or alternate parts, so there is nothing to deselect.

Build Guide

Official SO-101 / SO-ARM100 assembly guide (covers both leader and follower): huggingface.co/docs/lerobot/so101
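
To make the leader/follower mirroring described in the SO-101 entry concrete, here is a minimal sketch of one control step. It is an illustration under stated assumptions, not the LeRobot implementation: the joint names follow the kinematic list in the entry, while `JOINT_LIMITS_DEG`, `mirror_step`, and the ±90° limits are hypothetical placeholders that a real build would replace with calibrated values.

```python
from typing import Dict, Tuple

# Joint order taken from the SO-101 description; one servo per joint.
JOINTS = ["base_rotation", "shoulder", "elbow", "wrist_pitch", "wrist_roll", "gripper"]

# Hypothetical soft limits in degrees (assumption: real limits come from calibration).
JOINT_LIMITS_DEG: Dict[str, Tuple[float, float]] = {name: (-90.0, 90.0) for name in JOINTS}


def clamp(value: float, low: float, high: float) -> float:
    """Restrict value to the closed interval [low, high]."""
    return max(low, min(high, value))


def mirror_step(leader_deg: Dict[str, float]) -> Dict[str, float]:
    """Turn one reading of the leader's joint angles into follower targets.

    Each follower joint copies the matching leader joint, clamped to its limits.
    """
    missing = [j for j in JOINTS if j not in leader_deg]
    if missing:
        raise ValueError(f"leader reading is missing joints: {missing}")
    return {j: clamp(leader_deg[j], *JOINT_LIMITS_DEG[j]) for j in JOINTS}


if __name__ == "__main__":
    reading = {j: 0.0 for j in JOINTS}
    reading["elbow"] = 120.0  # beyond the assumed limit, so it is clamped
    print(mirror_step(reading))
```

Clamping on the follower side is a design choice for this sketch: the leader is moved by hand and can reach poses the follower should not attempt while holding an object.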

from $318 · BoM

Mobile Robots: SpotMicro ESP32

377 stars on GitHub · michaelkubina/SpotMicroESP32

SpotMicroESP32 is Michael Kubina's redesign of the SpotMicro quadruped, derived from KDY0523's original Thingiverse design, optimized for support-free 3D printing and built around an ESP32-DevKitC. 12-DOF (3 servos per leg).

Source: https://github.com/michaelkubina/SpotMicroESP32

SpotMicro family: pick your compute target.

| Variant | Controller | ROS |
|---------|-----------|-----|
| SpotMicro (Pi), mike4192 | Raspberry Pi | ROS Kinetic |
| SpotMicro Jetson Nano | Jetson Nano | ROS Melodic |
| SpotMicro ESP32 (this) | ESP32-DevKitC | No ROS |

Hardware:

- 12 servos (3 per leg: shoulder yaw, upper, lower)
- ESP32-DevKitC main controller
- Optional ESP32-CAM for vision
- LiPo battery with custom mounting brackets

Software ecosystem (community forks):

- Maarten Weyn BLE/IK firmware: https://github.com/maartenweyn/SpotMicroESP32
- Blacksheep Nitro Fork (PCB + walking gait + RC): https://github.com/Blacksheep909/SpotMicroESP32-Nitro-Fork
- SpotMicro-Leika (FreeRTOS + 2 gaits): https://github.com/runeharlyk/SpotMicroESP32-Leika
- SpotMicroAI Community: https://spotmicroai.readthedocs.io/

Resources:

- Thingiverse: https://www.thingiverse.com/thing:4559827
- Original SpotMicro by KDY0523: https://www.thingiverse.com/thing:3445283

Printing

SpotMicro is a 12-DOF quadruped (4 legs, 3 servos each), so the shoulder and limb parts must be printed once per leg (×4), and the chassis side is printed as a left/right pair (×2). This is the support-free Kubina redesign, so no supports are needed.

Print quantities:

- ChassisSide ×2; FrontCover ×1, RearCover ×1, Cameramount ×1.
- Per leg (×4 each): BottomShoulder, InnerShoulder, OuterShoulder, LimbBallBearingMount, LimbBottomShell, LimbTopShell, LimbServohornMount, FootTip.

Note on RearCover: the file is labelled a "Template." It is a customizable base cover meant to be edited (e.g. to add a cutout for your specific electronics/port layout) before printing, rather than a fixed final part. Print it as-is if you don't need a custom opening.

The upstream source also includes many experimental and alternate variants (different power-board mounting plates, optimized covers, mold parts). Those are optional alternates and are intentionally excluded from this set, which is one clean buildable SpotMicro.

from $130 · BoM

Mobile Robots: XLeRobot Dual-Arm Mobile Home Robot

Low-cost dual-arm mobile robot for embodied AI and household manipulation

from $660 · BoM

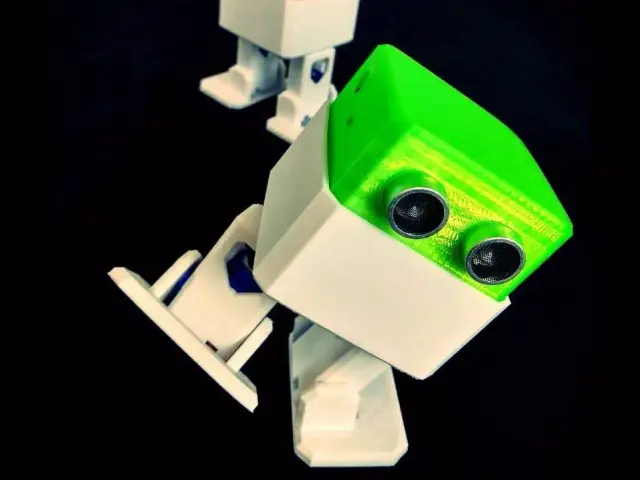

Humanoids: Open Duck Mini

2,642 stars on GitHub.

Open Duck Mini: Bipedal BDX Droid Replica

Source: https://github.com/apirrone/OpenDuckMini

A miniature bipedal character robot inspired by Disney's BDX droid (the little star of the Galactic Starcruiser walkabout experience and several Disney+ trailers). Designed by Antoine Pirrone (apirrone), Open Duck Mini scales that silhouette down to about 35 cm tall. With ~$1,400+ in parts (driven largely by 10 Dynamixel XC330-M288-T servos), this is a serious RL-trained biped build, not a budget project.

What it is

A walking, head-tilting, antenna-twitching little biped with plenty of character. Under the cute exterior it is a legitimate RL-trained biped:

- Legs trained in Isaac Gym, sim-to-real transferred to physical hardware
- Standing-up-from-fall policy with demonstrated perturbation robustness
- Sim2Sim pipeline through MuJoCo for validation before hardware deployment
- Head + antennas add expressiveness on top of the locomotion stack

Hardware

- Servos: Dynamixel XC330-M288-T across all joints (upgraded from XL330 for torque headroom on landing impacts)
- IMU: BNO055 9-axis for base orientation estimate
- Compute: Raspberry Pi Zero 2W running the learned policy
- Battery: small LiPo in the cage, hot-swappable

What you can do with it

- Run the pretrained walking policy straight from the repo: step the robot around and send it forward/turn commands over the orobot cloud surface.
- Collect your own episodes: use the remote surface here as the operator input for demos.
- Research biped RL: swap in your own PPO / SAC training runs; the MuJoCo and Isaac scenes are all published.
- Character animation: antenna and head servos give it personality for demos, events, or as a companion prop.

Printed parts

~130 STLs upstream (incl. wiring routing, battery variants, community mods); the canonical printable set is in Print Files below. See also the upstream [print/mods](https://github.com/apirrone/OpenDuckMini/tree/main/print/mods) tree for Jaime's v2 mods and Justin's "Park Head" variant.

Print quantities & material notes:

- Print x1 of each file.
- The two antennas use a mirrored pair: and (both required).
- The foot sole comes in two materials: print the TPU version for grip (recommended) or the non-TPU version if you don't have TPU. You only need one of the two per foot.
- Left/right leg parts (, ) are true mirrors, not interchangeable.

Attribution & license

- Designer: Antoine Pirrone (apirrone)
- Upstream: https://github.com/apirrone/OpenDuckMini
- License: Apache 2.0

Links

- Upstream: https://github.com/apirrone/OpenDuckMini
- Training code: https://github.com/apirrone/OpenDuckMiniRuntime
- BDX inspiration: Disney's Galactic Starcruiser droid

---

Install Notes

The orobot action runs the MuJoCo simulation training script (), not the physical robot deployment. The actual walking firmware for the physical duck lives in a separate repository: apirrone/OpenDuckMiniRuntime. To run on hardware, clone the Runtime repo separately, deploy to a Raspberry Pi Zero 2W, and update the action in this Program's editor to point at the Runtime's main script.

from $1400 · BoM

Other Robots: Stringman

Room-scale cable-driven parallel robot

from $210 · BoM

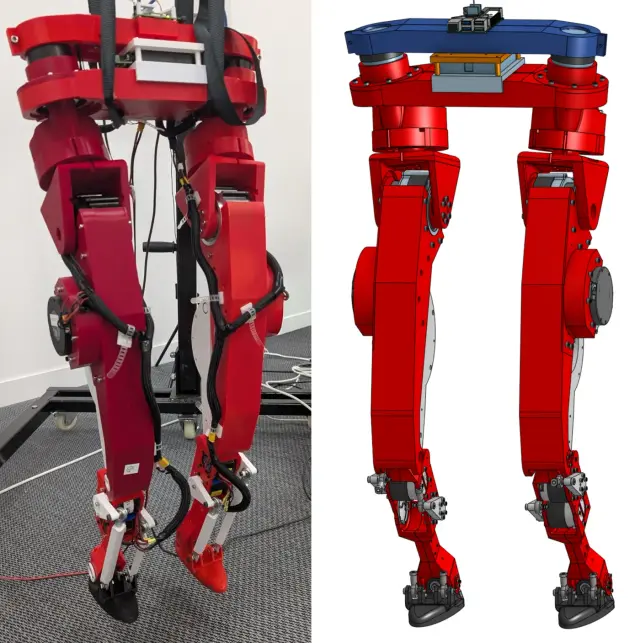

Humanoids: LeRobot Humanoid (Biped Platform)

The LeRobot Humanoid (Biped Platform) is a fully open-source, 3D-printed humanoid biped robot developed by Virgile Batto and the Hugging Face LeRobot team. Powered by a Raspberry Pi 5 and 12 RobStride CAN-FD actuators arranged across two legs (6 degrees of freedom per leg, 12 DOF total), it is a serious open-hardware effort to make capable humanoid locomotion accessible to researchers and advanced builders. Every mechanical, electrical, and firmware artifact is freely available under the Apache 2.0 license, from the Onshape CAD source to the bill of materials to the motor commissioning scripts.

Creator & License

- Author: Virgile Batto (Hugging Face LeRobot team)
- License: Apache 2.0
- Hardware repository (CAD, BOM, assembly docs, STLs): https://github.com/Virgileboat/lerobot-humanoid-hardware
- Runtime repository (motor control, gait, model inference): https://github.com/Virgileboat/lerobot-humanoid-runtime
- Public Onshape CAD document (start here for design exploration): https://cad.onshape.com/documents/fb645318a27646d1d8840be6/w/d1cae8805fb652b4d1614997/e/804a1da43f242001a05129b4

All build assets (STL files, BOM CSVs, wiring diagrams, motor commissioning scripts) are republished here under the same Apache 2.0 terms.

Printing

The Print All set is complete: all 47 unique STLs for one full biped. Both legs are present as proper left/right mirror pairs ( = left, = right). There are no missing parts and no alternates to deselect.

The build uses ~75 printed pieces from these 47 files because several parts repeat. Until per-file print-multiples are supported, print the quantities below:

- Print x4 each (bearing spacers, shared across both legs): , , ,
- Print x2 each (repeated per leg / both u-joints): , , , , , , , , , , ,
- Print x1: every other file (the remaining torso, hip, femur and tibia parts; each left/right mirror is its own file).

Total: 47 files → ~75 physical parts (3–4 kg PLA+). Left and right parts are true mirrors and not interchangeable.

Hardware Requirements

This robot is BYOD (Bring Your Own Device). The orobot-firmware layer does not currently support RobStride CAN-FD actuators; all joint control runs directly on the Raspberry Pi through the lerobot-humanoid-runtime repository. The orobot program record here serves as a build reference, asset hub, and control-interface stub.

Required hardware:

- Raspberry Pi 5 (8 GB recommended): primary compute
- SAVVYCANFD 2CH CAN-FD adapter (USB, dual channel, 12 Mbps max): connects Pi to motor bus
- 12 × RobStride actuators:
  - 2 × RobStride O0 (torso/hip yaw)
  - 2 × RobStride O2 (hip Z)
  - 4 × RobStride O3 (thigh)
  - 4 × RobStride O5 (shin)
- IMU: BNO055 or BNO085 breakout board
- Mechanical: ~75 custom 3D-printed PLA+ parts, plus precision bearings and fasteners per BOM

Specifications

| Parameter | Value |
|-----------|-------|
| Degrees of freedom | 12 (6 per leg) |
| Actuators | 12 × RobStride (CAN-FD) |
| Estimated print weight | ~3–4 kg PLA+ |
| Estimated total cost | ~$2,636 USD |
| Skill level | Advanced |
| Build time | Multi-week (motor commissioning → print → assembly → wiring → bring-up) |
| CAD source | Onshape (public, link above) |
| Control protocol | RobStride CAN-FD over USB adapter |

Build Start Sequence

1. Order long-lead items (motors, bearings, shoulder screws from ) first, since they can take weeks.
2. Print STLs per (~3–4 kg PLA+; orientation matters for structural leg parts).
3. Commission and ID every motor before any mechanical assembly. Protocol or ID errors after assembly require disassembling the leg to fix.

Full step-by-step at lerobot-humanoid-hardware.

Safety

> High-torque actuators: a physical E-stop is required and must be accessible at all times during powered operation. See for the full bring-up checklist.

Attribution

This program page was created by the orobot BROKER-2 index to help builders find and start this project. All design credit goes to Virgile Batto and the Hugging Face LeRobot team. Please star the source repos if this build helps your project.

from $2600 · BoM

3D Printers: Voron 0.2

Voron Zero is a compact, fully enclosed CoreXY 3D printer designed for high-speed, high-quality printing in a small footprint. The V0.2 revision is the official release maintained by Voron Design.

Highlights

- 120 × 120 × 120 mm build volume
- CoreXY motion system
- Low-mass direct-drive extruder (Mini Stealthburner)
- Fully enclosed chamber
- 24V DC heated bed
- Klipper firmware
- Filament runout sensor
- Sensorless homing

What's in this program

- Full STL set for the V0.2r1 revision (134 printed parts)
- Pointer to the official assembly manual PDF
- Klipper firmware configuration profile
- BOM via the Voron Configurator

Build notes

Most first-timers buy a complete kit rather than sourcing à-la-carte: LDO (~$800), Formbot (~$550), and Fysetc are the common choices. À-la-carte Misumi sourcing runs $400–600 but requires more lead time. Use the Voron Configurator to generate an accurate BOM for your region. The Voron community provides an interactive Printed Parts Guide and an active Discord for build support. Expect a multi-weekend build for first-timers.

Links

- Voron-0 GitHub (STLs + manual)
- Official Assembly Manual PDF
- Voron Configurator (authoritative BOM)
- Printed Parts Guide
- Voron Discord
- Klipper firmware docs

Source: https://github.com/VoronDesign/Voron-0 (GPL-3.0)

from $550 · BoM

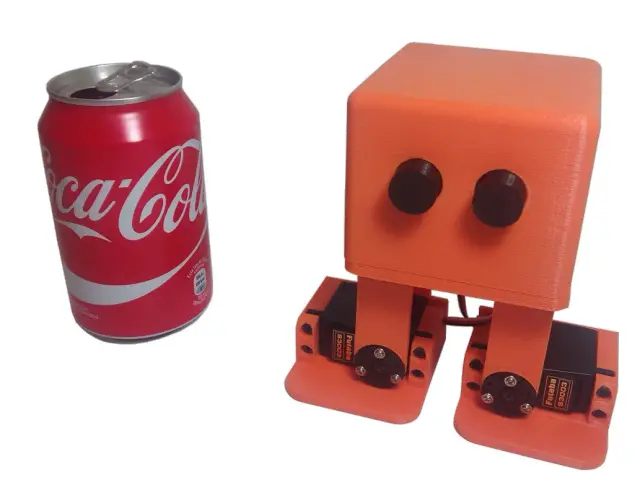

Humanoids: Stack-chan

1,382 stars on GitHub.

Stack-chan: Palm-Sized Companion Robot

Stack-chan is a palm-sized, open-source companion robot driven by an M5Stack microcontroller and JavaScript firmware. Created by Shinya Ishikawa, Stack-chan sits on your desk, turns its head to watch you, expresses emotions on its built-in display, and responds through speech.

Source: https://github.com/stack-chan/stack-chan

Capabilities

- Servo-driven head gaze/tracking
- Emotive faces (happy, angry, sad) + fully customizable face expressions
- Speech synthesis
- Composable behaviors ("mods") that you can mix and layer: expressions, tracking, speech, M5Unit addon support
- Programmable in JavaScript on the Moddable SDK (embedded JS framework; no C/Arduino required)

Servo Paths: Pick Before You Print

Two mutually exclusive configurations that change both the case geometry and electronics:

| Path | Servos | Notes |
|------|--------|-------|
| PWM | SG90 / MG90S | Simpler, cheaper, standard hobby servo wiring |
| Serial TTL | RS30X series | Smoother motion, requires a buffer IC and serial-servo case geometry |

Picking a path determines which STL set and which PCB you build.

Build Notes

- Requires a custom PCB: order Gerbers from JLCPCB/PCBWay ( directory in the repo)
- Modular 46-part printable enclosure (shell, bracket, feet, spacer, accessories: hat, backpack variants, Lego adapter)
- An official commercial M5Stack version (StackChan) is available; the open-source org fork is the reference for DIY

Metadata

- GitHub stars: 1,382
- Author: Shinya Ishikawa & community
- License: Apache 2.0

Links

- Firmware ()
- STLs ()
- Schematics + PCB ()
- Roadmap
- Demo video (YouTube, EN subs)

from $130 · BoM

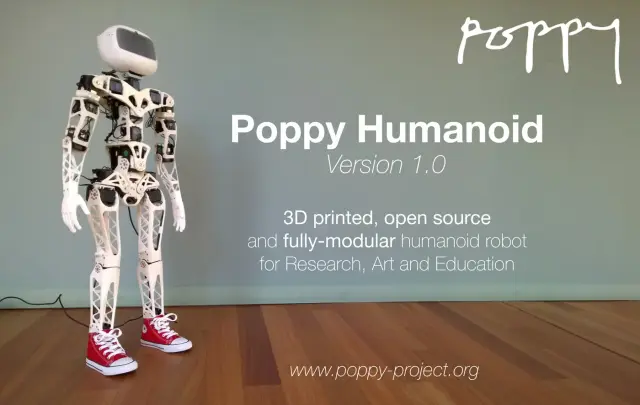

Humanoids: Poppy Humanoid

891 stars on GitHub.

Poppy Humanoid is an open-source, 3D-printed, 25-DOF humanoid robot from the Poppy Project, originally developed at Inria's Flowers team for research in embodied cognition, human-robot interaction, and robot learning. Standing 84 cm tall and weighing about 3.5 kg, Poppy is designed with biomechanically motivated bent-thigh proportions.

Source: https://github.com/poppy-project/poppy-humanoid

The robot uses 25× Dynamixel smart servos on a daisy-chained TTL bus. A Raspberry Pi onboard provides high-level control via pypot (the Python Dynamixel library developed for this project), with real-time joint targets streamed from user code. Compliant mode lets you back-drive the robot by hand to teach poses and trajectories, which is useful for both teaching and research. Poppy Humanoid has been used in dozens of published studies on bipedal walking, imitation learning, developmental robotics, and tutor-assisted programming education.

Poppy is a modular family: the Torso variant is a desk-mountable upper body, and the Ergo Jr is a 6-DOF desk arm. Both share the Dynamixel bus stack and the pypot API, so behaviors developed for one Poppy robot often port to another with configuration-level changes rather than code rewrites.

Hardware interface: USB-to-Dynamixel adapter (U2D2 or USB2AX) connected to the Raspberry Pi. No Arduino required.

This program exposes cloud-side endpoints for rest/sit postures, arm waving, compliant-mode toggle, dance primitive playback, and full demonstration record/replay. The Raspberry Pi onboard bridges the orobot cloud to the Dynamixel bus via pypot.

Credit to the Poppy Project community and Inria Flowers team (poppy-project.org). Upstream repository at https://github.com/poppy-project/poppy-humanoid. Hardware under Creative Commons Attribution-ShareAlike 4.0 (hardware/LICENSE.md), software under GPLv3 (software/LICENSE). Share-alike requires derivative hardware designs to be published under the same CC-BY-SA-4.0 license.

Build Guide

Official assembly guide: docs.poppy-project.org

Printing

This file set contains the structural body meshes for the Poppy Humanoid (head, chest, abdomen, bust/abs/hip motor brackets, and the limb segments: shoulder, forearm, hip, thigh, shin, foot). Poppy is a left/right symmetric biped, so the limb parts are mirror pairs: each l part has a matching r part, and both must be printed (they are not alternates).

Important note on completeness: the current list is the curated set of printable body meshes, but it is not yet the full humanoid. Several right-side mirror parts (rshoulder, rhip, rhipmotor, rthigh, rfoot, rshouldermotor) are not present in this list and must be printed as mirrors of their left-side counterparts. The complete, official, print-ready STL package for the full ~25-DOF Poppy Humanoid is distributed by the upstream project as the "STL3Dprintedparts.zip" asset attached to the poppy-project/poppy-humanoid GitHub releases; builders should use that archive for the definitive, complete part list and quantities.

Note on file naming: these are the "visual" geometry meshes from Poppy's URDF model. The companion "_respondable" meshes that appear in the source repository are simplified collision hulls used only for physics simulation and should NOT be printed. They have been excluded from this Print All set.

from $1050 · BoM

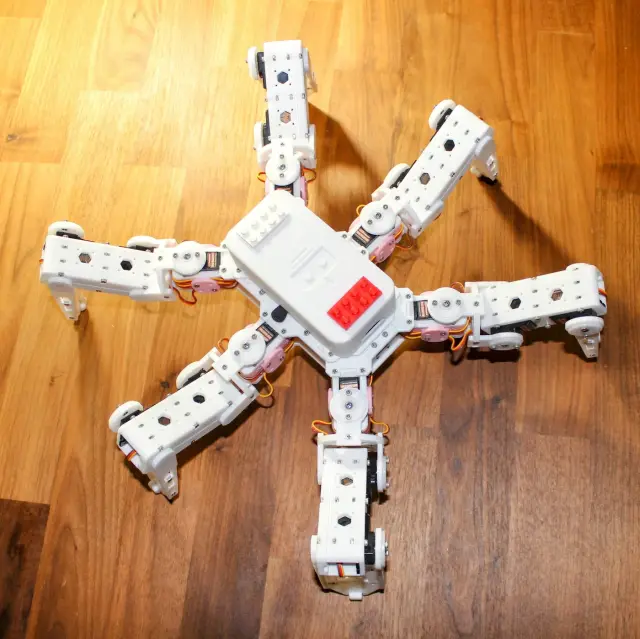

Mobile Robots: Hexapod

Hexapod: 3D Printed Six-Legged Walking Robot

A fully 3D-printed hexapod robot with 18 servo motors (three per leg) providing lifelike, agile locomotion. Designed by rookidroid.com, this project uses either an ESP32 or Raspberry Pi Pico W/2W controller board with built-in WiFi for wireless remote control. The firmware supports over-the-air (OTA) updates so you can iterate on motion patterns without touching the hardware.

Hexapod v2 is the recommended build. The original v1 used MG90S servos, which are prone to failure; v2 upgrades to stronger 21G DS Power/Miuzei servos and is significantly more reliable. Do not use MG90S.

Note: The controller board is proprietary to rookidroid.com. A generic ESP32 dev board can substitute; check the firmware docs for pin mapping.

Specifications

| Property | Value |
|----------|-------|
| Legs | 6 |
| Servos | 18 x 21G (3 per leg: hip, knee, ankle) |
| Controller | ESP32 or Raspberry Pi Pico W/2W |
| Communication | WiFi (UDP port 1234) + OTA updates |
| Power | 2 x 18650 Li-ion cells |
| Printed Parts | 20 STLs, all print without supports |
| Print time | ~40–60 hours total |
| Skill level | Intermediate |

Motion Modes

The ESP32 firmware implements a pre-computed look-up-table gait system with 18 motion modes including: directional walking at 0, 45, 90, 135 degrees (left and right variants), 180 degrees; fast forward and backward; turn left and right; climb forward and backward; body rotations on X, Y, Z axes; and a twist mode.

Attribution

- Creator: rookidroid.com
- Source: https://github.com/rookidroid/hexapod
- License: GNU GPL v3

Printing

This is a complete, modular set of 20 unique parts. Because the hexapod has 6 identical legs (each with 3 joints), many parts must be printed in multiples. Per the upstream build guide, print the following quantities:

- Body (print once each): bodybase ×1, bodytop ×1, bodytopcover ×1, bodybattery ×1.
- Body (print in pairs): bodyside ×2, bodyfrontback ×2.
- Servo brackets (one per leg): bodyservoside1 ×6, bodyservoside2 ×6, bodyservotop ×6.
- Legs and joints (per-leg multiples): jointbottom ×12, jointtop ×12, jointcross ×6, legbottom ×6, legtop ×6, legside ×12.
- Feet (one set per leg): footbottom ×6, foottop ×6, footground ×6, foottip ×6.
- Optional: accessorycableholder ×1 (a cable-management add-on, not required for the robot to function).

No supports are needed; orient each part as shown in the upstream print thumbnails. All 20 files are the correct, current parts; there are no duplicates, alternates, or version variants to choose between.
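
The hexapod print quantities above are easy to miscount by hand, so a short tally can help when planning filament and print queue. The sketch below simply copies the quantities from the list into a dictionary; the `summarize` helper and its return shape are illustrative choices, not part of the upstream project.

```python
# Print quantities copied from the hexapod list above (file name -> copies).
PRINT_QUANTITIES = {
    "bodybase": 1, "bodytop": 1, "bodytopcover": 1, "bodybattery": 1,
    "bodyside": 2, "bodyfrontback": 2,
    "bodyservoside1": 6, "bodyservoside2": 6, "bodyservotop": 6,
    "jointbottom": 12, "jointtop": 12, "jointcross": 6,
    "legbottom": 6, "legtop": 6, "legside": 12,
    "footbottom": 6, "foottop": 6, "footground": 6, "foottip": 6,
    "accessorycableholder": 1,  # optional cable-management add-on
}


def summarize(quantities, optional=("accessorycableholder",)):
    """Return (unique files, total printed pieces, pieces excluding optional parts)."""
    unique = len(quantities)
    total = sum(quantities.values())
    required = total - sum(quantities[name] for name in optional)
    return unique, total, required


if __name__ == "__main__":
    unique, total, required = summarize(PRINT_QUANTITIES)
    print(f"{unique} unique files, {total} pieces ({required} without optional parts)")
```

The unique-file count matches the 20 STLs stated in the specifications, which is a quick sanity check that no part was dropped from the list.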

from $120 · BoM

Mobile Robots: Kame32

Kame32 is an open-source quadruped walking robot by JavierIH. Built around an ESP32 and 8 servo motors, it walks, runs, dances, and performs a rich library of quadruped gaits, all controlled wirelessly via a built-in web-based gamepad over Wi-Fi.

All structural parts are 3D-printable. A custom PCB (KiCAD gerbers included) centralizes servo wiring. Choose MG90S (higher torque) or SG90 servos; brackets for both variants are included.

Specifications

| Property | Value |
|----------|-------|
| Motors | 8 servos (MG90S or SG90) |
| DOF | 8 (2 per leg) |
| Controller | ESP32 Dev Kit |
| Control | Web gamepad via Wi-Fi |
| PCB | Custom KiCAD design (gerbers included) |
| CAD | FreeCAD source file |
| Build time | 1–2 weekends |
| Skill level | Intermediate |

Gaits

Walk · Backward · Run · Omni Walk · Turn Left · Turn Right · Moonwalk · Dance · Up/Down · Push Up · Hello · Jump · Home

All gaits use the Octosnake oscillator library: sinusoidal servo control with per-axis phase offsets.

Hardware

ESP32 drives 8 PWM servos at 50 Hz / 16-bit resolution. Per-servo calibration offsets are stored in ESP32 NVS.

Firmware

PlatformIO / Arduino. Build environments: calibration (tune offsets) and gamepad (web UI controller).

Attribution

- Creator: JavierIH
- Source: github.com/JavierIH/Kame32
- License: CC BY-SA 4.0 (hardware) - GPL-3.0 (code)

Build Guide

Source repository with CAD, firmware, and assembly files: github.com/JavierIH/Kame32

Printing

Kame32 is an 8-servo quadruped: 2 servos per leg, 4 legs. The servo brackets and legs come in two versions matched to the servo you use: SG90 (plastic-gear) or MG90S (metal-gear). This Print All set uses the SG90 version, which is the default servo in the bill of materials; MG90S is listed there only as an optional higher-torque upgrade. The four MG90S-specific files have been removed so the list yields one buildable robot rather than two overlapping servo sets.

Print quantities: print body-box and body-cover once each (the chassis). The leg and bracket parts are per-leg, so the left/right SG90 leg and bracket files are each printed in multiples to cover all four legs (the robot has 4 legs, each with a left and right side); print the shared leg-link, bushing, and foot parts in matching multiples. Build one full set of leg hardware per leg from the mirrored left/right SG90 parts.

Note: if you are building with MG90S metal-gear servos instead, use the corresponding -mg90s bracket and leg files from the upstream source (github.com/JavierIH/Kame32) in place of the -sg90 parts.
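
The Kame32 entry mentions sinusoidal servo control with per-axis phase offsets and a 50 Hz / 16-bit PWM output. As a rough illustration of how those two pieces fit together, the sketch below computes one oscillator sample and converts a servo angle into a 16-bit duty count. It is not the Octosnake API or the Kame32 firmware: the function names are hypothetical, and the 500–2500 µs pulse range is an assumed value that real servos would need calibrated.

```python
import math

PWM_FREQ_HZ = 50           # from the Kame32 hardware notes
PWM_RESOLUTION_BITS = 16   # from the Kame32 hardware notes
PERIOD_US = 1_000_000 / PWM_FREQ_HZ  # 20 000 microseconds per PWM frame


def oscillator_angle(t_s, amplitude_deg, offset_deg, phase_rad, period_s):
    """Sinusoidal servo target: offset + amplitude * sin(2*pi*t/period + phase)."""
    return offset_deg + amplitude_deg * math.sin(2 * math.pi * t_s / period_s + phase_rad)


def angle_to_duty(angle_deg, min_us=500.0, max_us=2500.0):
    """Map an angle in 0..180 degrees to a 16-bit duty count.

    min_us and max_us are assumed pulse widths, not values from the source.
    """
    angle = max(0.0, min(180.0, angle_deg))
    pulse_us = min_us + (max_us - min_us) * angle / 180.0
    max_count = (1 << PWM_RESOLUTION_BITS) - 1
    return round(pulse_us / PERIOD_US * max_count)


if __name__ == "__main__":
    # Two servos driven by the same oscillator, half a cycle apart.
    for t in (0.0, 0.25, 0.5, 0.75):
        a = oscillator_angle(t, 30.0, 90.0, 0.0, 1.0)
        b = oscillator_angle(t, 30.0, 90.0, math.pi, 1.0)
        print(t, angle_to_duty(a), angle_to_duty(b))
```

Changing only the phase argument between servos is what turns one shared oscillator into a gait: legs that should move in opposition get phases half a cycle apart.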

from $50 · BoM

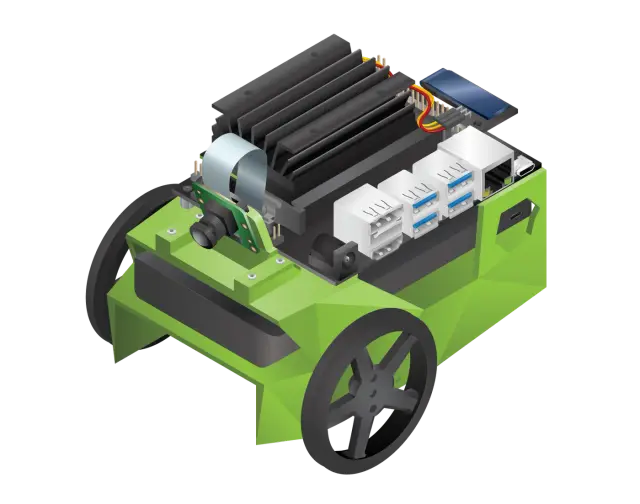

Mobile Robots: OpenBot

3,262 stars on GitHub · MIT · Intel Labs + TU Munich

OpenBot turns your smartphone into the brain of a low-cost robot: anyone with an Android or iOS device and a ~$50 budget can build a capable AI-powered robot.

Source: https://github.com/isl-org/OpenBot

The robot body is a 3D-printed differential-drive chassis holding two gear motors, a speed controller, and a custom PCB, all controlled by an Arduino Nano. The smartphone docks on top, providing the camera, CPU, and network stack. Your phone's neural engine runs person-following, autonomous navigation, and custom AI policies via the companion app.

4 body variants + phone mount: regular (two-part top/bottom), block (PCB stack), glue (no-screw assembly), slim (narrow). See Print Files for the full set. The project also supports tank, MTV off-road, and RTR RC-chassis variants.

---

Install Notes

OpenBot's intelligence lives in the Android/iOS app: vision, navigation, data collection, and AI inference all run on the phone. The orobot device code only bridges the Arduino Nano motor controller layer (forward/backward/turn via serial JSON). Higher-level behaviors (person following, autopilot, data recording) require the OpenBot app connected to the Arduino via USB OTG cable. The orobot integration is useful for basic motor testing and manual drive, but does not replicate the full OpenBot feature set.
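
The install notes describe a bridge that sends forward/backward/turn commands to the motor controller as serial JSON. The sketch below shows the usual differential-drive mixing step that sits behind such a bridge, plus one way the result could be encoded. The message schema (`left`/`right` keys), the 255 PWM ceiling, and the sign convention (positive turn speeds up the left wheel) are assumptions for illustration, not the format used by OpenBot or the orobot device code.

```python
import json


def mix(forward, turn, max_pwm=255):
    """Differential-drive mixing: forward and turn in [-1, 1] -> (left, right) PWM.

    When the sum saturates, both wheels are scaled together so the
    left/right ratio (and therefore the turning arc) is preserved.
    """
    left = forward + turn
    right = forward - turn
    scale = max(1.0, abs(left), abs(right))
    return round(left / scale * max_pwm), round(right / scale * max_pwm)


def encode_command(forward, turn):
    """Serialize one drive command as a newline-terminated JSON line.

    The {"left": ..., "right": ...} schema is hypothetical.
    """
    left, right = mix(forward, turn)
    return json.dumps({"left": left, "right": right}) + "\n"


if __name__ == "__main__":
    print(encode_command(1.0, 0.0), end="")   # straight ahead
    print(encode_command(0.0, -1.0), end="")  # spin in place
    print(encode_command(1.0, 1.0), end="")   # saturated arc
```

Newline-terminated JSON keeps the receiving side simple: the microcontroller can read until a newline and parse one complete message at a time.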

from $50 · BoM

Mobile Robots: Yertle Quadruped

Yertle: A 3D Printed Quadrupedal Robot for Locomotion Research

Yertle is a 12-DOF quadruped robot designed for locomotion research. It fuses the leg geometry of the Kangal quadruped with the body geometry of SpotMicro, making most parts cross-compatible with the SpotMicro ecosystem.

Creator: Jerome Alexander Graves · License: MIT · Status: Work-in-progress (functional; ROS2 integration pending)

This Program is a learning entry point. The original firmware is C++ on an ESP32 with a Python GUI master controller; the orobot Program here exposes a stubbed JavaScript interface so you can explore the gait/command surface in-browser. To run on real hardware, follow the upstream build and flash instructions.

Mechanical

- 4 legs × 3 DOF (hip yaw + hip pitch + knee) = 12 servos total
- Leg extension: ~20 cm
- Mass: ~1.8 kg
- Frame: PLA or ABS, printable on a 150 × 150 mm bed (Ender 3 Pro tested)
- Print time: ~2 weeks (5–10 h/day on a single Ender 3 Pro)

3D-Printed Parts

This is the complete set of 21 unique parts: the four-piece outer Shell (Top, Bottom, Front, Back), the Frame (shoulder frames, servo mounts, body beams, electronics plate), and the Legs. Yertle is a 4-legged, 12-servo (3-DOF-per-leg) quadruped, so the leg and shoulder parts must be printed in multiples. Recommended material: PLA or ABS; a build plate of at least 150 mm is needed for the larger parts.

Print quantities (per the upstream build guide):

- Shell: Top Shell ×1, Bottom Shell ×1, Front Shell ×1, Back Shell ×1 (print these in a second color if you want a two-tone body).
- Frame: Inner Shoulder Frame ×2, Outer Shoulder Frame ×2, Upper Shoulder Frame ×2, Lower Shoulder Frame ×2, Left Servo Mount ×2, Right Servo Mount ×2, Servo Mount Top Bracket ×4, Side Body Beam ×2, Electronics Mounting Plate ×1.
- Legs (one set per leg, ×4 legs): Femur ×4, Femur Servo Connector ×4, Inner Tibia ×4, Outer Tibia ×4, Short Link ×4, Long Link ×4, Left Shoulder ×2, Right Shoulder ×2.

Software Architecture

Master/slave over serial or UDP/WiFi:

- Slave (ESP32, C++/Arduino): servo control, sensor read, inverse kinematics, safety limits.
- Master (Python 3 GUI): gait generation, sensor fusion, ROS2 (todo). Runs on anything with WiFi + screen + Python 3.
- Simulation: Python-based built-in simulator; URDF available for Gazebo/Unity.

Build Cost

~$315–350 total (servos dominate at ~$200). See full BOM in the upstream Design/README.

Hardware Compatibility (BYOD)

Yertle is not an orobot-firmware-native build. It uses a custom ESP32 firmware. Running the orobot Program against real hardware requires bridging the orobot WebSocket protocol to Yertle's UDP master/slave protocol, a custom integration tracked under the orobot ESP32 BYOD effort.

Inspirations

- Kangal (leg design)
- SpotMicro (body geometry, parts compatibility)
- Open Quadruped

Links

- GitHub Repository
- STL Files
- Design/README & BOM
- ESP32 Firmware
- Python Master GUI
- URDF / Simulation

---

Extracted from commit on 2026-04-27.

from $350 · BoM

Home Automation: DIY SmartLock

DIY SmartLock

A battery-powered, 3D-printed smart lock built around an ESP32 and driven by an N20 geared motor. Designed for residential door locks, it replaces the manual key turn with touch-triggered automation: touching the metal door knob from outside wakes the ESP32 from deep sleep, and the ESP32 checks MQTT for authorization before unlocking.

How It Works

The ESP32 spends nearly all of its time in deep sleep (as low as 5.2 µA on a bare ESP32), waking only when the capacitive touch sensor detects contact with the door knob. On wake it connects to WiFi, subscribes to an MQTT topic (door/auth), and drives the N20 motor clockwise or counter-clockwise to lock or unlock. The system integrates with Node-RED, Home Assistant, or any MQTT-capable automation stack for presence-based auto-auth.

Important: The N20 motor must operate at 9 V. The 3–6 V versions will not reliably actuate the door latch.

Specifications

| Property | Value |
|---|---|
| Controller | ESP32 (bare WROOM-32 or LOLIN D32) |
| Motor | N20 geared DC motor (9 V, ~40 mA no-load) |
| Motor Driver | TB6612FNG (direct GPIO control) |
| Power (logic) | 2x AA alkaline batteries (~3.2 V) |
| Power (motor) | 9 V block battery |
| Deep Sleep Current | ~5.2 µA (bare ESP32), 125 µA (LOLIN D32) |
| Wake Trigger | Capacitive touch sensor (ESP32 pin T2) |
| Connectivity | WiFi 802.11 b/g/n + MQTT |
| Printed Parts | 4 STLs (base, gear-motor, cage-motor, gear-key-knob) |
| Build Difficulty | Intermediate |
| Firmware | Arduino / PlatformIO |

Attribution

- Designer: Florian Vogler (@vogler on GitHub)
- Source: https://github.com/vogler/SmartLock
- License: Source-available (no explicit open-source license; check the repo for usage terms)
- 3D Model: https://a360.co/4lLHHwa (Fusion 360, downloadable in multiple formats)
- Build Album: https://photos.app.goo.gl/bewiZ1qH8sHnJjmg7

Printing

Print one of each of the four parts: 1-base (the mounting base), 2-gear-motor (motor drive gear), 3-cage-motor (motor cage/housing), and 4-gear-key-knob (the key knob gear). Together these make one complete SmartLock mechanism.

Note: the upstream source also includes a file named 0-SmartLock-assembled, a fully assembled preview model of the whole lock. It is a visual reference for how the parts fit together, not a printable part, and it has been excluded from this Print All set so the list yields exactly the parts you need to build the lock.
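To put the two deep-sleep figures in the specifications in perspective, the sketch below estimates how long the logic batteries would last if the board did nothing but sleep. The 2500 mAh AA capacity is an assumption (it is not stated in the source), and the estimate ignores wake-ups, WiFi traffic, regulator losses, and battery self-discharge, so treat it as an upper bound for comparing boards rather than a runtime prediction.

```python
HOURS_PER_YEAR = 24 * 365

# Assumption (not from the source): a typical alkaline AA cell holds about
# 2500 mAh. Two cells in series raise the voltage, not the capacity.
ASSUMED_AA_CAPACITY_MAH = 2500.0


def sleep_only_lifetime_years(capacity_mah: float, sleep_current_ua: float) -> float:
    """Years a battery would last if the board did nothing but deep sleep."""
    sleep_current_ma = sleep_current_ua / 1000.0
    hours = capacity_mah / sleep_current_ma
    return hours / HOURS_PER_YEAR


# Deep-sleep currents taken from the specifications table.
bare_esp32_years = sleep_only_lifetime_years(ASSUMED_AA_CAPACITY_MAH, 5.2)
lolin_d32_years = sleep_only_lifetime_years(ASSUMED_AA_CAPACITY_MAH, 125.0)

print(f"bare ESP32: {bare_esp32_years:.1f} years of deep sleep")
print(f"LOLIN D32:  {lolin_d32_years:.1f} years of deep sleep")
```

Under these assumptions the bare ESP32 figure comes out at roughly 55 years and the LOLIN D32 at a little over 2 years. In practice the bare module is limited by battery shelf life and wake events rather than sleep current, while on the dev board the sleep current itself is a meaningful part of the budget.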

from $37🛠 BoM Mobile

MobileOtto DIY

Otto DIY: Bipedal Walker Robot

Otto is one of the most beloved open-source DIY robots: a small bipedal walker that anyone can build with a 3D printer, an Arduino Nano, and four micro servos. Originally created by the Otto DIY community, Otto can walk, turn, dance, sing, and emote, with optional ultrasonic, sound, and LED matrix add-ons.

This Program is a learning interface for the Otto DIY platform. Full hardware control runs on the Arduino firmware in the source repo below. The orobot.io control sandbox lets you experiment with the command surface before wiring it into your own Otto.

Specs

| Property | Value |
|----------|-------|
| Type | Bipedal walker |
| Servos | 4 × SG90 micro servo (LeftLeg, RightLeg, LeftFoot, RightFoot) |
| Controller | Arduino Nano (also Uno, Micro, Mega, ESP8266, ESP32 in dev) |
| Height | ~12 cm |
| Estimated cost | $50–75 (core build) / $80–110 (with sensors) |
| Estimated build time | 2–6 hours |
| Skill level | Beginner / Intermediate |

Source

- Repo: https://github.com/OttoDIY/OttoDIYLib (canonical Arduino library, v13.0)
- STLs + assembly guide: https://www.ottodiy.com/
- Library examples: https://github.com/OttoDIY/OttoDIYLib/tree/master/examples
- Otto Blockly / app: https://www.ottodiy.com/#app
- Author: Otto DIY community
- License: GPL v3 (code) + CC-BY-SA 4.0 (mechanical design)

Hardware integration status

Otto runs on Arduino Nano with the OttoDIYLib firmware. orobot-firmware does not yet have Arduino Nano support; this Program provides the learning interface and command surface. To run a real Otto, flash OttoDIYLib onto your Arduino directly and use the bundled examples (, ).

Capabilities

Walk, turn, jump, moonwalk dance, ~10 named gestures (Happy, Sad, Angry, Love, Confused, Wave, Magic, Fail, Sleeping…), and 19 built-in songs. Full API in the OttoDIYLib examples.

Credits

Massive thanks to @JavierIH, @Obijuan, @sfranzyshen, and the dozens of contributors who have built and maintained Otto DIY for nearly a decade. Otto is one of the projects that proved tiny, friendly, accessible robots could be a global open-hardware movement.

from $64🛠 BoM Humanoid

HumanoidZowi Biped

163 stars on GitHub · CC-BY-SA 4.0 · bq / JavierIH

Zowi is the official open-source biped from bq (sponsored until 2016): a compact 4-servo educational walking robot, descended from the Otto/BoB Thingiverse lineage (original concept by k120189, Thingiverse 43708). It is designed entirely in FreeCAD, so every part is editable and remixable.

Source: https://github.com/JavierIH/zowi

Hardware:

- 4 Futaba S3003 (or compatible) servos
- BQ ZUM BT328 or Arduino-compatible board
- 4xAAA battery holder

The repository ships the canonical body/chassis/leg/foot STLs plus a deep mods folder with remix variants: Forge, IronZowi, JIM, Kobuki, MicroRaider, Scopum, Zowarrior, Zowimanoid, Zowiquilator. It also includes Arduino code, schematics, and Spanish-language docs.

Resources:

- Original Thingiverse concept: http://www.thingiverse.com/thing:43708
- DIWO blog (Spanish): http://diwo.bq.com/zowi-introduccion-a-los-robots-bipedos/
- License: CC-BY-SA 4.0

Printing

Zowi is a 4-servo biped (Otto-family). The complete printed set is just five parts: body, chassis, leg, footL, and footR. Print one of each. footL and footR are a mirror pair (left and right feet) and are both required; they are not alternates. The LED-matrix mouth and ultrasonic/sensor modules are off-the-shelf electronics and are not printed.

This Print All list was cleaned up to contain only the canonical Zowi parts. The upstream source repository also hosts several community remixes/mods (e.g. Forge, IronZowi, JIM) with their own arms, hands, heads, shields, and full-body redesigns. Those are separate alternate builds, not part of the stock 4-servo Zowi, and have been removed along with a set of duplicate re-imported core files so the list yields exactly one buildable robot.

from $70🛠 BoM Humanoids

HumanoidsModular Biped

467 stars on GitHub · MIT · MakerForge Tech / Aaron Mason

The Modular Bipedal Robot is an open-source 3D-printable companion robot from MakerForge Tech / Aaron Mason, built around a Raspberry Pi + Arduino Pro Mini split. The repo includes the full STL set for the v2 body, head, neck, and legs, plus a modular Python/C++ software framework with drop-in modules.

Source: https://github.com/makerforgetech/modular-biped

Architecture:

- Raspberry Pi (Pi 4 or Pi 5) handles vision, speech, and orchestration
- Arduino Pro Mini handles real-time servo control via serial
- Custom PCBs for power and IO
- IMX500 AI camera module supported

Software modules (drop-in):

- Vision/tracking: face/object detection, motion detection
- Speech/TTS: text-to-speech, Braillespeak
- LLM/translation: ChatGPT chat, language translation
- Peripherals: NeoPixel LEDs, buzzer, audio
- Connectivity: RTL-SDR radio, Viam integration, serial bus

Build resources:

- Wiki & build guide: https://github.com/makerforgetech/modular-biped/wiki
- 3D-print files: https://github.com/makerforgetech/modular-biped/tree/main/3dprints/v2
- Hardware list: https://github.com/makerforgetech/modular-biped/wiki/Hardware
- Software architecture: see Software Architecture.drawio.svg in repo

License: MIT.

Printing

This is the coherent v2 print set. Modular Biped is an 8-servo bipedal robot: 6 leg servos (hip/knee/ankle per side) plus 2 head servos. Print one of each part. The legs and feet are left/right mirror pairs (leftlegupper/rightlegupper, leftleglower/rightleglower, leftfoot/right_foot); both sides are required and are not alternates. The parts cover the skeleton, the front/back body case, the head (top, bottom, lid, visor), the neck/tilt mechanism, and the two legs with feet. Print the parts as drawn for the v2 design.

Note: the upstream source includes a file named complete (with a matching complete preview image). This is a full assembled model of the whole robot used for visualization, not an individual printable part. It has been removed from this Print All set so the list yields each part once rather than printing a fused copy of the entire robot.

from $260🛠 BoM Mobile Robots

Mobile RobotsUnderwater Drone

75 stars on GitHub.

This open-source, customisable underwater drone is a 3D-printable submersible robot designed by Guido and Fabio Schillaci at Humboldt-Universität zu Berlin. Published as an arXiv preprint, the design supports multiple propeller and thruster configurations for different research and exploration tasks.

Source: https://github.com/guidoschillaci/underwater-drone

The drone is built around the 4" watertight enclosure sold by BlueRobotics, which provides a waterproof housing for the electronics. Thrusters use brushless motors (see the note below on component versions). All 18 structural components are 3D-printable and should be printed with solid infill for watertight structural integrity. The modular clamp system allows configuring the drone for different mission profiles: forward-facing cameras, lateral thrusters, ballast placement, or instrument mounting. See Print Files for the full list of 18 printable components.

Thruster note: the description references Turnigy Aerodrive DST (DST-700/DST-1200) brushless motors; some BOM links point to the ApisQueen 5060 Waterproof Brushless Underwater Motor instead. Verify which motor you are sourcing before purchase, because the two are not the same form factor.

Applications include aquatic research, underwater exploration, coral reef monitoring, and educational robotics.

License: Creative Commons Attribution 4.0 International (CC-BY 4.0).

---

Install Notes

This repository contains CAD files and assembly instructions for a 3D-printable submersible. No firmware or control software is included; the design is intended for custom electronics integration. The orobot Program code is a reference stub only. To build a controllable version, you will need to design or source your own motor controller and connect it via serial or WiFi.

Build Guide

3D models, build instructions, and configuration details: github.com/guidoschillaci/underwater-drone

---

Printing

This Print All set maps 1:1 to the upstream folder: all 18 structural components, no sim meshes or duplicates. Print in PETG (hydrolysis-stable) with solid / near-100% infill for watertight structural integrity.

Quantities (4-thruster configuration)

The drone uses 4 thrusters, so thruster and propeller parts repeat:

| Part | Qty | Notes |
|------|-----|-------|
| thrustermain.stl | 4 | One per thruster |
| thrustercap.stl | 4 | One per thruster |
| thrustermotormount.stl | 4 | One per thruster |
| thrusterdst2bluerovadapter.stl | 4 | Motor-to-housing adapter (one per thruster) |
| propeller.stl | 4 | One per thruster (or use motor-matched commercial props per BOM) |
| clampanteriorsuperior.stl | 1 | Modular clamp |
| clampanteriorinferior.stl | 1 | Modular clamp |
| clampposteriorsuperior.stl | 1 | Modular clamp |
| clampposteriorinferior.stl | 1 | Modular clamp |
| posteriorclamp2tubeconnector.stl | 1 | Clamp-to-tube connector |
| rearclampconnector.stl | 1 | Rear clamp connector |
| adapter90.stl | 1+ | Tube adapter |
| adapterroundmale.stl | 1+ | Tube adapter |
| adapterroundfemale.stl | 1+ | Tube adapter |
| adaptersplit.stl | 1+ | Tube adapter |
| stick.stl | 1+ | Structural rod |
| ballastcylinder.stl | 1+ | Ballast tube |
| ballastcap.stl | 1+ | Ballast cap |

Modular by design: the clamp/adapter/ballast system is meant to be reconfigured for different mission profiles (forward camera, lateral thrusters, ballast placement). Print extra adapters, sticks, and ballast cylinders to suit your chosen layout; the counts above are a baseline for a standard 4-thruster build.

Motor note: the upstream design targets Turnigy Aerodrive DST motors (hence ); if you source the ApisQueen 5060 motor listed in some BOM links, the motor-mount fit differs, so verify before printing the thruster mounts.

from $1050🛠 BoM Arm Robots

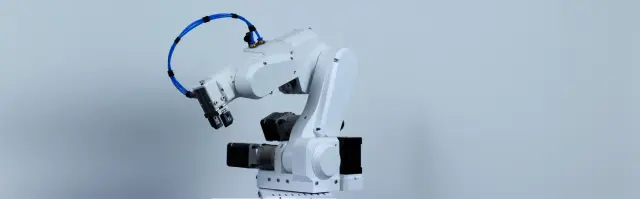

Arm RobotsPAROL6 Desktop Robot Arm

PAROL6 Desktop Robot Arm

A high-performance 6-DOF desktop robotic arm designed to mirror industrial robots in mechanical design, control software, and usability, but small enough to sit on your desk. Designed by Petar Crnjak (Source Robotics). Released under GPLv3. STL files, control software, and GUI are all open-source.

What you can do

- Run kinematic demos from your browser
- Practice pick-and-place with the included gripper attachments
- Learn industrial-style arm control (home, jog, teach points)
- Extend with your own gripper tooling (pneumatic, vacuum, 2-finger)

Specs

| Property | Value |
|---|---|
| Degrees of Freedom | 6 + gripper |
| Joints | J1 base, J2 shoulder, J3 elbow, J4/J5 forearm, J6 wrist |
| Payload | ~500g (typical) |
| Reach | ~400mm |
| Controller | Custom PAROL6 control board (STM32-based, PlatformIO) |
| Motors | NEMA 17 stepper motors on joints |
| License | GPLv3 (software + STLs) |

Build options

Two paths:

1. Buy a kit from Source Robotics: fully supported, pre-sourced parts
2. Source & print yourself: follow the BOM and Building instructions

STL files

This program includes a representative subset of 12 STLs covering BASE, SHOULDER, ELBOW, FOREARM, GRIPPER, and ESTOP groups. For the full 41-part canonical set (plus mounting plates and extras), see the STL directory on GitHub.

Resources

- 📖 Official Docs
- 🎥 YouTube demo
- 🐍 Python API
- 🎛 Commander software
- 🤖 ROS2 / MoveIt simulation
- 💬 Discord community

⚠️ Safety

PAROL6 involves lethal voltages and moving mechanical parts. Read the full SAFETY WARNING AND DISCLAIMER before assembling or operating.

Attribution

Source: PCrnjak/PAROL6-Desktop-robot-arm · License: GPLv3 · © Petar Crnjak / Source Robotics

Printing

PAROL6 is a 6-DOF desktop robot arm. The Print All set covers the full mechanical build: the base/electronics enclosure, the J1 turret/shoulder assembly, the J2 upper-arm joint, the upper arm and its covers, the elbow (J3/J4), the forearm and J5 wrist drive (pulleys, belt lids), the wrist, the E-stop housing, and a gripper attachment. Print one of each part. Most belts/pulleys are printed once; follow the upstream assembly guide for orientation and supports.

Gripper options: this set includes GripperARMS plus the pneumatic and vacuum gripper holders as alternate end-effectors. Choose the one that matches your hardware (you don't need all three). A second "horizontal pneumatic" gripper variant exists upstream and can be substituted if preferred.

Important, one oversized part: the main "Upperarm.STL" (~33 MB) is the single largest part and exceeds this site's file-size limit, so it could not be hosted in the Print Files list. Download it directly from the upstream repository (PCrnjak/PAROL6-Desktop-robot-arm, under STL/UPPERARM/Upper_arm.STL) and print it alongside the parts here. Every other structural part of the arm is included.

from $350🛠 BoM Mobile Robots

Mobile Robotsmjbots Hoverbot

57 stars on GitHub · Apache 2.0 · Josh Pieper / mjbots

A compact 2-wheeled robot built around surplus hoverboard hub motors for outdoor and semi-rugged terrain. Designed by Josh Pieper of mjbots.

Source: https://github.com/mjbots/hoverbot

Capabilities:

- Hoverboard hub motors (2×) driven by mjbots moteus-c1 brushless FOC controllers (CAN-FD)
- mjbots pi3hat handles IMU (gyro + accelerometer) and CAN-FD bus aggregation
- Raspberry Pi 4 runs control software via CAN-FD
- Fully 3D-printable PETG chassis with M3/M2.5 heat-set inserts
- Optional GoPro mount for first-person video
- Runs for hours; handles grass, gravel, and uneven pavement

Sourcing heads-up:

- mjbots specialty parts only: moteus-c1, pi3hat, and powerdist are from mjbots.com, not Amazon
- 18V drill battery driving 36V-rated motors: deliberately limited to ~2 m/s top speed, mechanically safe but not stock hoverboard performance

from $500🛠 BoM Arm Robots

Arm RobotsAssembler 0

3D-printed robot arms that can 3D-print their own structural parts

from $260🛠 BoM Mobile Robots

Mobile RobotsLeKiwi Mobile Base

LeKiwi: Mobile Base for SO-ARM100

LeKiwi is a three-wheeled omnidirectional mobile base designed to carry the SO-ARM100 (or its sibling Koch arm) around a real environment. Add a 5-DOF teleop arm on top, put a camera on the base and the wrist, and you have a low-cost mobile manipulation platform compatible with the entire LeRobot stack.

Why "kiwi"?

Kiwi drive (three omniwheels at 120°) gives you true holonomic motion: the base can translate in any direction and rotate independently, no slewing required. That's huge for teleop demos where a human operator is driving with a joystick and expects the base to move "like a spaceship," not like a car.

What you get

- Three-wheel kiwi drive with omniwheels on custom-printed hubs
- Feetech servo drive (this Print All set uses the Feetech ; a Dynamixel-specific hub/mount set exists upstream if you use Dynamixel servos)
- Raspberry Pi + optional Jetson Orin compute cage printed onto the base plate
- Battery compartment sized for standard 3S/4S LiPo packs
- Servo-controller mount and base-mounted webcam holder
- Mounting pattern on the top plate that drops directly into an SO-ARM100 base

What you do with it

- Mobile teleoperation: leader arm drives the follower arm, joystick drives the base. Stream both from a laptop anywhere on the network.
- Mobile imitation learning: extend LeRobot's episode recorder to include base velocities. The existing Diffusion Policy and ACT models have been adapted by the community to condition on base state.
- Multi-camera observation: base camera sees the environment, wrist camera sees the task. Both go into the policy.
- Classroom mobile robotics: cheap, printable, and works with commodity servos.

Printed parts

~12 printed parts (sandwich base plate, 3× drive mounts + wheel hubs, Pi/Jetson cages, battery tray, camera mounts, SO-ARM100 base shim); see Print Files.

Note: is a replacement SO-ARM100 base shim that lets the arm sit flush on the base plate. It is required if you are mounting the arm on this base.

Attribution & license

- Designer: SIGRobotics-UIUC (Student Interest Group on Robotics, University of Illinois Urbana-Champaign)
- Upstream: https://github.com/SIGRobotics-UIUC/LeKiwi
- License: Apache 2.0
- Ecosystem: LeRobot

Links

- Upstream repo: https://github.com/SIGRobotics-UIUC/LeKiwi
- SO-ARM100 (arm to mount on top): https://github.com/TheRobotStudio/SO-ARM100
- LeRobot stack: https://github.com/huggingface/lerobot

Build Guide

Step-by-step assembly guide: github.com/SIGRobotics-UIUC/LeKiwi/blob/main/Assembly.md

---

Printing

This Print All set is the Feetech build of the LeKiwi base. It maps to the root folder of the upstream repo (the , , and 5V/wired extras are intentionally excluded).

| Part | Qty | Notes |
|------|-----|-------|
| baseplatelayer1.stl | 1 | Sandwich base, lower layer |
| baseplatelayer2.stl | 1 | Sandwich base, upper layer |
| drivemotormount.stl | 3 | One per wheel (kiwi drive has 3 wheels) |
| servowheelhub.stl | 3 | One per omniwheel hub |
| batterymount.stl | 1 | 3S/4S LiPo tray |
| servocontrollermount.stl | 1 | Waveshare bus-servo driver mount |
| basecameramount.stl | 1 | Base webcam holder |
| wristcameramount.stl | 1 | Wrist webcam holder |
| modifiedbasearm.stl | 1 | SO-ARM100 base shim (required if mounting the arm) |
| picasetop.stl | 1 | Raspberry Pi case (default compute) |
| picasebottom.stl | 1 | Raspberry Pi case (default compute) |
| jetsonholder.stl | 1 (optional) | Alternative compute mount, only if using a Jetson Orin instead of the Pi |

Print-multiples: and each print x3 (three-wheel kiwi drive). Everything else is x1.

Compute mount, pick one: the Raspberry Pi case ( + ) is the default and matches the BOM. The is a mutually-exclusive alternative for a Jetson Orin build; print it instead of the Pi case, not in addition. (The list keeps both so either build is possible; you don’t print all three.)

Total for a standard Feetech + Pi build: 15 prints (9 single parts incl. the Pi case + 3× drive mount + 3× wheel hub; the Jetson holder is optional/alternative).
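The holonomic motion described under Why "kiwi"? comes down to a small piece of inverse kinematics: each wheel's rim speed is the component of the desired body velocity along that wheel's rolling direction, plus a rotation term. The sketch below uses the standard textbook formulation for three omniwheels spaced 120° apart. The wheel mounting angles, the 0.125 m base radius, and the function name are illustrative assumptions, not values or code from the LeKiwi repository.

```python
import math

# Assumption: wheels sit at these angles (degrees) around the base centre,
# 120 degrees apart, each rolling tangentially to the base circle.
WHEEL_ANGLES_DEG = (90.0, 210.0, 330.0)


def kiwi_wheel_speeds(vx: float, vy: float, omega: float, base_radius_m: float) -> list[float]:
    """Map a body velocity to per-wheel rim speeds in m/s.

    vx, vy: desired translation in the robot frame (m/s).
    omega:  desired rotation rate (rad/s, counter-clockwise positive).
    """
    speeds = []
    for angle_deg in WHEEL_ANGLES_DEG:
        a = math.radians(angle_deg)
        # Project the translation onto the wheel's rolling direction and add
        # the contribution from rotating about the base centre.
        speeds.append(-math.sin(a) * vx + math.cos(a) * vy + base_radius_m * omega)
    return speeds


# Hypothetical base radius, for illustration only.
BASE_RADIUS_M = 0.125

sideways = kiwi_wheel_speeds(0.2, 0.0, 0.0, BASE_RADIUS_M)   # translate without turning
spin = kiwi_wheel_speeds(0.0, 0.0, 1.0, BASE_RADIUS_M)       # turn in place
print("translate:", [round(s, 3) for s in sideways])
print("spin:     ", [round(s, 3) for s in spin])
```

Because translation and rotation enter the formula as independent terms, any combination of the three can be commanded at once, which is what lets the base strafe and turn at the same time. Dividing each rim speed by the wheel radius gives the angular speed to send to the servos.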

from $450🛠 BoM Arm Robots

Arm RobotsQubit — Dual-Arm Desktop Robot

Qubit: a dual-arm desktop robot with expressive 16×16 LED eyes

from $500🛠 BoM Arm Robots

Arm RobotsSO-ARM101 Standard Open Arm

SO-ARM101: Standard Open Arm

A 6-DOF open-source teleoperation arm system designed by The Robot Studio in collaboration with Hugging Face. SO-101 is the next-gen version of the SO-100 with improved wiring, easier assembly, and updated motors. Built to work seamlessly with the LeRobot library for end-to-end AI robotics research.

What makes it interesting

- Leader + Follower teleoperation pair: move the leader arm, the follower mimics in real time
- LeRobot-native: record demonstrations, train imitation-learning policies, deploy to the arm
- Low cost: ~$110 per arm (~$220 for a leader+follower pair) in printed parts + motors
- Active community: dozens of vendors selling kits worldwide

Specs

| Property | Value |
|---|---|
| Degrees of Freedom | 6 per arm (leader + follower = 12 total) |
| Motors | Feetech STS3215 servos |
| Reach | ~420mm |
| License | Apache 2.0 (code + hardware) |
| Ecosystem | Hugging Face LeRobot |

Build options

Two paths:

1. Buy a kit: dozens of vendors listed in the README (PartaBot US, Seeed Studio, WowRobo, etc.)
2. Source & print yourself: follow the 3DPRINT.md guide and the Hugging Face Assembly Guide

STL files

This program includes 12 representative SO-101 individual parts (base, motor holders, arm links, wrist roll/pitch, gripper jaw, handle). For the full SO-101 printable set plus the SO-100 legacy parts, optional accessories (cam mounts, bases, grippers), and the Mini variant, browse the STL directory on GitHub.

Note on catalog duplicates

This page covers the standalone SO-ARM101 arm hardware. See also SO-101 Teleop Arm for the paired leader+follower teleop setup framing.

Resources

- SO-101 Assembly Guide (HuggingFace)
- LeRobot library
- Discord community
- Printing guide (3DPRINT.md)
- The Robot Studio

Attribution

Source: TheRobotStudio/SO-ARM100 · License: Apache 2.0 · The Robot Studio / Hugging Face contributors

Build Guide

Official SO-101 / SO-ARM100 assembly guide: huggingface.co/docs/lerobot/so101

---

Printing

This Print All set is the 12-part SO-101 build. A full SO-101 system is a leader + follower pair, so most structural parts print x2 (one per arm). A few parts are arm-specific:

| Part | Qty (leader + follower pair) | Notes |
|------|---------|-------|
| BaseSO101.stl | x2 | Arm base, both arms |
| BasemotorholderSO101.stl | x2 | Base motor mount, both arms |
| MotorholderSO101Base.stl | x2 | Lower motor holder, both arms |
| MotorholderSO101Wrist.stl | x2 | Wrist motor holder, both arms |
| UnderarmSO101.stl | x2 | Lower arm link, both arms |
| UpperarmSO101.stl | x2 | Upper arm link, both arms |
| RotationPitchSO101.stl | x2 | Shoulder rotation/pitch, both arms |
| WristRollPitchSO101.stl | x2 | Wrist roll/pitch, both arms |
| WristRollSO101.stl | x1 | Leader wrist roll |
| HandleSO101.stl | x1 | Leader handle (you grip this) |
| WristRollFollowerSO101.stl | x1 | Follower wrist roll |
| MovingJawSO101.stl | x1 | Follower gripper jaw |

Leader vs follower (not alternates, print both): the leader arm is the one you move by hand, so it gets the + . The follower arm mimics it and does the gripping, so it gets the + . A complete teleop pair needs all 12 files.

Single-arm build: if you only want one follower arm (no teleop), print one copy of the 8 shared structural parts plus the follower-specific + , and skip the leader + .

Servos: 12 Feetech STS3215 total (6 per arm), with mixed gear ratios per the BOM (7× 1/345, 2× 1/191, 3× 1/147).

The full upstream STL directory also has SO-100 legacy parts, optional camera mounts/bases, and the Mini variant. These are not included here, to keep the Print All set to the standard arm.
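The pair-versus-single-arm rules above are easy to get wrong when queueing prints, so here is a minimal sketch that turns them into a print plan. The file names and leader/follower roles are copied from the table as listed; the function name and the "pair" / "follower" labels are made up for illustration.

```python
# File names and roles as they appear in the quantities table.
SHARED_PARTS = [
    "BaseSO101.stl",
    "BasemotorholderSO101.stl",
    "MotorholderSO101Base.stl",
    "MotorholderSO101Wrist.stl",
    "UnderarmSO101.stl",
    "UpperarmSO101.stl",
    "RotationPitchSO101.stl",
    "WristRollPitchSO101.stl",
]
LEADER_ONLY = ["WristRollSO101.stl", "HandleSO101.stl"]
FOLLOWER_ONLY = ["WristRollFollowerSO101.stl", "MovingJawSO101.stl"]


def so101_print_plan(build: str) -> dict[str, int]:
    """Return {file name: copies} for a 'pair' or a single 'follower' arm."""
    if build == "pair":
        plan = {part: 2 for part in SHARED_PARTS}      # one copy per arm
        plan.update({part: 1 for part in LEADER_ONLY})
        plan.update({part: 1 for part in FOLLOWER_ONLY})
    elif build == "follower":
        plan = {part: 1 for part in SHARED_PARTS}
        plan.update({part: 1 for part in FOLLOWER_ONLY})
    else:
        raise ValueError(f"unknown build type: {build!r}")
    return plan


pair_plan = so101_print_plan("pair")
follower_plan = so101_print_plan("follower")
print(len(pair_plan), "files,", sum(pair_plan.values()), "prints for a teleop pair")
print(len(follower_plan), "files,", sum(follower_plan.values()), "prints for one follower arm")
```

A teleop pair works out to all 12 files and 20 individual prints, while a lone follower arm needs 10 files printed once each.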

from $220🛠 BoM Mobile Robots

Mobile RobotsAlohaMini Dual-Arm Mobile

AlohaMini: Dual-Arm Mobile Manipulation Platform

A miniaturized Aloha-style dual-arm mobile platform with a motorized vertical lift column. Two SO-ARM101 follower arms sit on a shared lift tower mounted to a three-wheel omni base, giving the platform a tabletop-to-floor reach envelope that makes real household-scale manipulation possible at hobbyist cost.

What makes AlohaMini distinctive

Most DIY bi-manual mobile bases have one big gap: they're too short. An SO-ARM on a fixed 20-cm pedestal can't pick up something on the floor or reach a countertop, because the workspace is stuck at one height. AlohaMini solves this with a powered vertical lift that raises both arms together, covering the full vertical reach of a human workspace. Paired with:

- Two SO-ARM101 follower arms (leader arms for teleop are also included in the STL set)
- A three-omniwheel kiwi drive base (bearing-mounted axles, printed chassis)
- Seeed Studio XIAO + WaveShare Dynamixel bus driver mounts
- Camera wrist mounts on both arms

What you can do with it

- Imitation learning at floor and table height: record bimanual episodes where the arms need to descend to grab something off the floor, then lift to place it on a surface.
- Mobile bimanual teleop: pair with two leader arms (also in the STL set) to drive both follower arms plus the base from a single operator.
- VLA training data collection: the shared base frame + lift + dual arms is close to the canonical "robot" embodiment used in recent VLA papers (RT-2, OpenVLA).
- Household tasks: clearing a dining table, loading a dishwasher, or picking laundry off the floor, all things a fixed-height arm can't attempt.

Printed parts

~60 STLs: dual SO-ARM101 arms (follower grippers + leader handles), motorized lift column, 3-omniwheel base, battery tray, VR teleop handles; see Print Files.

Attribution & license

- Designer: Yi-Teng Li (liyiteng)
- Upstream: https://github.com/liyiteng/AlohaMini
- License: Apache 2.0
- Built on: SO-ARM101, LeKiwi base geometry, and the ALOHA/ALOHA2 dual-arm research lineage from Stanford

Links

- Upstream: https://github.com/liyiteng/AlohaMini
- LeRobot: https://github.com/huggingface/lerobot
- ALOHA paper: https://tonyzhaozh.github.io/aloha/

Build Guide

Hardware assembly guide (BOM, wiring, mechanical assembly): github.com/liyiteng/AlohaMini/blob/main/docs/hardwareassembly.md

Printing

This is one complete dual-arm mobile platform: two SO-ARM101 follower arms + two leader arms (for teleop) on a shared lift column over a 3-omniwheel base. The 20 STLs use a role-prefix naming scheme, and most are printed in multiples, so printing one of each gives only a fraction of a build. Quantities below are per platform:

- Shared SO-ARM101 arm parts (print 4× each: 2 followers + 2 leaders): DBaseSO101Lekiwi, DBasemotorholderSO101, DMotorholderSO101Base, DMotorholderSO101Wrist, DRotationPitchSO101, DUnderarmSO101, DUpperarmSO101, DWristRollPitchSO101, DWristRollSO101. These are the common arm-segment parts every SO-ARM101 needs, so each is printed ×4 (one per arm). The two controller-mount plates are an either/or per your electronics: DSeeedstudioMountingPlate (Seeed XIAO) vs DWaveShareMountingPlate (WaveShare bus driver). Print whichever board(s) you use, ×4 if used on all arms.
- Follower-only parts (print 2×: one per follower arm): FFollowerMovingJawSO101, FFollowerWristRollFollowerSO101, FFollowerSO-ARM101camerawristmount
- Leader-only parts (print 2×: one per leader/teleop handle): LLeaderHandleSO101, LLeaderTriggerSO101
- Omni-base (kiwi drive, 3 wheels): OBChassisServoMount ×3, OBChassisBearingCover ×3, OBChassisShaftSleeve1224 ×3 (one set per omni wheel); OBChassisSidePanel ×2–3 (chassis sides, match to your frame)

So the 20 unique STLs expand to roughly 60 physical prints (≈4 kg PLA per the BOM).

Servo count: 16× STS3215-C018 (4 base + 12 follower arms) and 12× STS3215-C046 (leader arms). Leader and follower parts are both required for teleop data collection; they are not alternates.

Note: the lift-column structural parts referenced in the build guide are large and may be cut/printed per the upstream folder; this Print All set covers the arm, gripper, base and teleop-handle parts.
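The "20 unique STLs, roughly 60 prints" figure can be checked by tallying the per-group quantities from the Printing section. The sketch below does that as a minimum/maximum range. One interpretation is assumed here that the source does not spell out: the controller mounting plates are counted as 4 prints if a single plate type is used on all four arms, and 8 if both types are.

```python
# group name: (unique STL files, minimum prints, maximum prints)
PRINT_GROUPS = {
    "shared arm parts (9 files, x4 each)": (9, 36, 36),
    # Assumption: one plate type on all four arms (4) up to both types (8).
    "controller mounting plates (2 files)": (2, 4, 8),
    "follower-only parts (3 files, x2 each)": (3, 6, 6),
    "leader-only parts (2 files, x2 each)": (2, 4, 4),
    "omni-base per-wheel parts (3 files, x3 each)": (3, 9, 9),
    "chassis side panel (1 file, x2 to x3)": (1, 2, 3),
}

unique_stls = sum(files for files, _, _ in PRINT_GROUPS.values())
min_prints = sum(low for _, low, _ in PRINT_GROUPS.values())
max_prints = sum(high for _, _, high in PRINT_GROUPS.values())

print(f"{unique_stls} unique STLs -> {min_prints} to {max_prints} physical prints")
```

The tally gives 20 unique files and 61 to 66 prints, consistent with the rough figure of 60 quoted above; the exact number depends on how many mounting plates and side panels your build needs.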

from $750🛠 BoM Mobile Robots

Mobile RobotsSpot Micro Quadruped — Jetson Nano (ROS Melodic)

Spot Micro Quadruped: Jetson Nano / ROS Melodic Port

> Jetson-Nano-specific port of the SpotMicro design family. See also: SpotMicro (Pi) and SpotMicro ESP32 entries on orobot for other compute targets.

A 12-servo, 4-legged 3D-printed open-source quadruped running ROS Melodic on an NVIDIA Jetson Nano. This fork () ports the original Raspberry Pi 3B + ROS Kinetic stack onto the Jetson Nano with ROS Melodic, unlocking the GPU for on-board SLAM, perception, and future learned-policy work.

The robot supports sit, stand, body angle, and walk control via two configurable gaits (8-phase stable gait by default; faster trot gait optional). Body-mounted RPLidar A1 enables real-time SLAM and 2D mapping. State is published over tf2 with open-loop calculated odometry.

Printing

This is a 12-DOF quadruped with four legs, but the STL list holds only one left-leg set and one right-leg set. To build the whole robot you must print the leg parts in the quantities below (the body parts are single).

- Body, print x1 each: , , (3-piece shell) (base plate)
- Left legs, print x2 each (2 left legs): , , ,
- Right legs, print x2 each (2 right legs): , , ,

That is 4 complete legs (one STS/MG-class servo per joint, 3 per leg = 12 DOF). Left and right parts are true mirrors, not interchangeable. Optional repo extras (lidar mount, chassis reinforcements) and the status LCD are not part of this print set.

Hardware

- Compute: NVIDIA Jetson Nano (this port). Original target was Raspberry Pi 3B.
- Frame: Thingiverse Spot Micro (KDY0523), thing:3445283
- Servos: 12× PDI-HV5523MG (or cls6336hv; print files compatible)
- Servo control: PCA9685, i2c
- Power: 2S 4000 mAh LiPo direct to servo board; HKU5 5V/5A UBEC for Jetson + peripherals
- Sensing: RPLidar A1 (body-mounted)
- Optional: 16×2 i2c LCD panel for state readout

Software stack

- OS: Ubuntu 18.04 (for ROS Melodic on Jetson Nano). Original used Ubuntu 16.04 + ROS Kinetic.
- Framework: ROS Melodic catkin workspace
- Languages: C++ (motion control, kinematics) + Python (keyboard command, plot)
- Key packages: , , (URDF), , ,

Build flow

1. Flash Jetson Nano with Ubuntu 18.04 + ROS Melodic. Add a 1 GB SWAP partition (catkin will OOM without it).
2. Create a catkin workspace, clone this repo into , run .
3. .
4. .
5. Calibrate all 12 servos using the spreadsheet + workflow before powering the legs.
6. on the Jetson; from a remote machine.

Family cross-reference

This is one of three SpotMicro variants on orobot. Pick the compute target that matches your build:

- SpotMicro (Raspberry Pi): original , Pi 3B + ROS Kinetic.
- SpotMicro ESP32: , microcontroller-only port without ROS.
- SpotMicro Jetson Nano (this entry): Jetson Nano + ROS Melodic, GPU-accelerated SLAM.

Source

- Repo: https://github.com/0x49b/spotMicro-ROS-Melodic-Jetson-Nano
- Commit: 8c027c8a357dceace856d586022954205bc247ed
- License: MIT
- Upstream: (this is a Jetson Nano fork)
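The entry mentions "open-loop calculated odometry". In general terms that means the pose estimate is obtained by integrating the commanded body velocity over time, with no feedback from wheel encoders or an IMU. The sketch below shows that idea as generic planar dead reckoning. It is not code from the repository; the `Pose2D` type, the function name, and the 10 Hz update rate are assumptions made for illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class Pose2D:
    x: float = 0.0    # metres, world frame
    y: float = 0.0    # metres, world frame
    yaw: float = 0.0  # radians, counter-clockwise positive


def integrate_command(pose: Pose2D, vx: float, vy: float, yaw_rate: float, dt: float) -> Pose2D:
    """Advance the pose by one time step using the commanded body velocity.

    vx, vy are in the robot's own frame, so they are rotated into the world
    frame by the current yaw before being integrated.
    """
    cos_yaw, sin_yaw = math.cos(pose.yaw), math.sin(pose.yaw)
    return Pose2D(
        x=pose.x + (vx * cos_yaw - vy * sin_yaw) * dt,
        y=pose.y + (vx * sin_yaw + vy * cos_yaw) * dt,
        yaw=pose.yaw + yaw_rate * dt,
    )


# Walk forward at 0.1 m/s for one second, updating at an assumed 10 Hz.
pose = Pose2D()
for _ in range(10):
    pose = integrate_command(pose, vx=0.1, vy=0.0, yaw_rate=0.0, dt=0.1)
print(pose)
```

Because nothing measures what the legs actually did, foot slip and gait timing errors accumulate in an estimate like this, which is one reason the lidar-based SLAM matters for longer runs.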

from $480🛠 BoM Mobile Robots

Mobile RobotsJetBot — NVIDIA Jetson AI Rover

JetBot: NVIDIA Jetson AI Rover

JetBot is an open-source AI robot platform built on the NVIDIA Jetson Nano. It combines a small two-wheeled chassis with a Jetson Nano compute module, a wide-angle camera, and the full NVIDIA JetPack SDK, enabling object following, collision avoidance, and road-following using convolutional neural networks trained with Jupyter notebooks. JetBot is NVIDIA's official introductory robotics platform, making it one of the most well-documented and widely-built AI robots for beginners and educators.

> Note: The original Jetson Nano is end-of-life / discontinued. New builders should target the Jetson Orin Nano instead. Verify JetPack and software compatibility before purchasing, as some JetBot tutorials and libraries are written for the original Nano and may require updates.

What It Can Do

- Object following: tracks a sport ball or colored object using a CNN trained in-browser with Jupyter
- Collision avoidance: learns to navigate around obstacles via a head-mounted classifier
- Road following: stays on a track by regressing a steering angle from a front camera
- Teleoperation: browser gamepad control via the interactive widget interface

Related platforms

- JetRacer: NVIDIA's RC-car variant with steering servo for high-speed track following
- JetCar: community variant with larger chassis for heavier payloads
- Spot Micro Jetson: quadruped variant using Jetson Nano for onboard inference

Source

- Repo: https://github.com/NVIDIA-AI-IOT/jetbot
- License: MIT
- Docs: https://jetbot.org
- JetPack SDK: https://developer.nvidia.com/embedded/jetpack

---

Printing

This Print All set maps 1:1 to the upstream folder. A complete JetBot needs 4 printed parts: the chassis, the camera mount, and one caster base + one matching caster shroud.

| Part | Qty | Notes |
|------|-----|-------|
| chassis.stl | 1 | Main two-wheel body |
| cameramount.stl | 1 | Wide-angle camera bracket |
| casterbase60mm.stl or casterbase65mm.stl | 1 | Pick one; match your caster ball size |
| castershroud60mm.stl or castershroud65mm.stl | 1 | Pick one; must match the caster base you chose |

Caster size: pick one matched pair (do not print all four). JetBot ships in two caster sizes: 60 mm and 65 mm. Choose the size that matches the caster ball/wheel in your kit, and print the base + shroud of the same size together. Mixing a 60 mm base with a 65 mm shroud will not fit. The list intentionally keeps both sizes so either kit works; a blind “Print All” would produce 2 unused parts. There is no upstream-designated default size, so the choice depends on your hardware. If unsure, the 65 mm caster is the more common in recent JetBot kits.
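As a small illustration of the matched-pair rule, the sketch below builds the four-file print list for a given caster size and refuses sizes that have no parts in the set. The file names are copied as they appear in the table; the function name is hypothetical.

```python
SUPPORTED_CASTER_SIZES_MM = (60, 65)


def jetbot_print_list(caster_mm: int) -> list[str]:
    """Return the four files for one JetBot, with base and shroud in the same size."""
    if caster_mm not in SUPPORTED_CASTER_SIZES_MM:
        raise ValueError(
            f"no caster parts for {caster_mm} mm; choose one of {SUPPORTED_CASTER_SIZES_MM}"
        )
    return [
        "chassis.stl",
        "cameramount.stl",
        # Base and shroud are always taken from the same size, never mixed.
        f"casterbase{caster_mm}mm.stl",
        f"castershroud{caster_mm}mm.stl",
    ]


for name in jetbot_print_list(65):
    print(name)
```

Deriving both caster file names from a single size argument makes it impossible to queue a 60 mm base with a 65 mm shroud by accident.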

from $250🛠 BoM